ChatGPT Prompts and Commentary #002 – ChatGPT is a Liar!

So I asked ChatGPT about a company that crossed my mind for a moment. When I was a kid, there was a company that made “everything” out of cardboard: tables, chairs, lamps, etc. I thought they were clever and always wondered why they weren’t in the US making stuff for college students, starter bachelorette pads, etc.

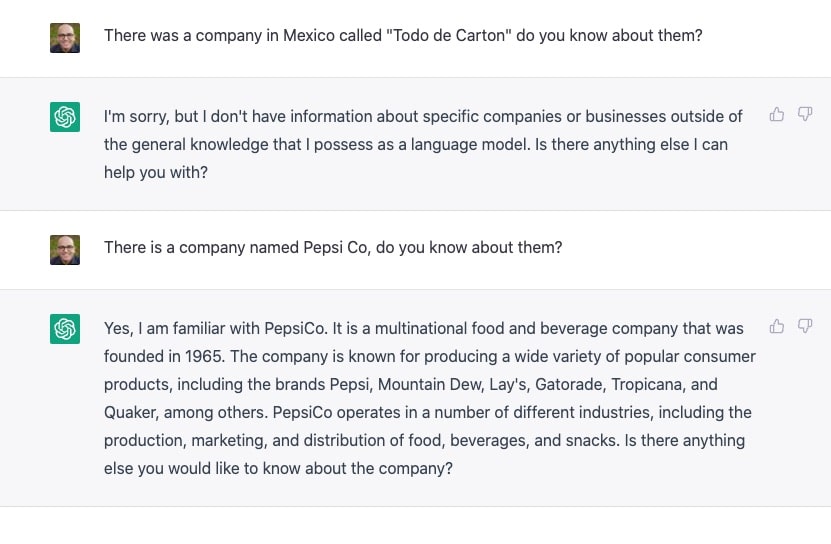

So I asked ChatGPT about it. This screenshot isn’t really about the company itself, which is apparently still in business; I just wanted to give you some content. This is about ChatGPT lying. So much, just like a human. Here’s example number one. I will have more later.

Do you see the contradiction?

So you see the contradiction. Ah, the lies, I tell ya! OK, ChatGPT, if you don’t have information about specific companies, how could you answer the second question?

By now I understand how this works and why it can answer one question and not the other. Obviously, it’s a dataset issue, but have you figured it out yet?